You open a weather app on your phone. It pulls the latest forecast in seconds. That quick data grab? It starts with an API call. APIs act as messengers. They let your app talk to a distant server and fetch fresh info.

This process feels instant. In reality, the request makes a full round trip from your device to the server and back. Modern setups use HTTP/3 and edge computing to keep a typical round trip in the 50-200 millisecond range. A reply served from a nearby edge cache can land in under 10ms. Smart routing and caching are what make apps feel smooth.

Knowing this journey helps developers debug issues, and users benefit from faster loads. Next, we trace the path step by step, from building the request to the reply racing home.

How Your App Builds and Launches the Request

Your app starts by crafting a precise message. It picks details like the HTTP method and URL. Then it adds headers and maybe a body. This prep happens on your device or browser.

First, the app chooses a method: GET for reads or POST for sends. A URL like https://api.weather.com/v1/forecast?city=NYC points to the endpoint. Headers set rules. They include Content-Type: application/json for the body format, or a credential in Authorization: Bearer token.

For auth, apps often use OAuth access tokens or JWTs. These prove you have access. Bodies carry data in JSON, like {"location": "NYC"}. Compression with gzip shrinks payloads. Conditional headers like If-None-Match ask the server whether a cached copy is still current.

Once ready, the app sends it out. But first, it needs the server’s IP address. That’s where DNS steps in.

Picking the Right Details for Your Message

Apps build requests with care. The method tells the server the action. GET fetches data. POST creates new entries.

URLs combine base paths and params. For example, /v1/users?id=123 targets specifics. Headers add context. Check best practices for API headers and auth. They cover types, keys, and tokens.
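Here is a minimal sketch of that URL assembly using Python's standard library. The build_url helper and the example.com host are assumptions made for illustration; they are not part of any real API.

```python
from urllib.parse import urlencode, urljoin


def build_url(base: str, path: str, params: dict) -> str:
    """Join a base URL and a path, then append URL-encoded query parameters."""
    url = urljoin(base, path)
    query = urlencode(params)  # escapes spaces and other special characters
    return f"{url}?{query}" if query else url


# Hypothetical host; the path and parameter mirror the example in the text.
url = build_url("https://example.com", "/v1/users", {"id": "123"})
print(url)  # https://example.com/v1/users?id=123
```

Letting urlencode handle escaping avoids hand-built query strings that break on spaces or ampersands.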

A simple POST might look like this:

    POST /api/posts HTTP/1.1
    Host: example.com
    Authorization: Bearer eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9...
    Content-Type: application/json

    {"title": "Hello World"}

Tokens go in headers for security. Never expose them in URLs. This setup keeps things safe and efficient.
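The same POST can be sketched in Python with the standard library. The code only builds the request object and does not send it. The build_post helper and the placeholder token are assumptions for illustration.

```python
import json
import urllib.request


def build_post(url: str, token: str, payload: dict) -> urllib.request.Request:
    """Build (but do not send) a JSON POST with a bearer token in the header."""
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            # The token travels in a header, never in the URL.
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


# PLACEHOLDER_TOKEN stands in for a real credential.
req = build_post("https://example.com/api/posts", "PLACEHOLDER_TOKEN", {"title": "Hello World"})
print(req.get_method(), req.full_url)
```

Passing req to urllib.request.urlopen would actually send it. Keeping the build step separate makes the method, headers, and body easy to inspect while debugging.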

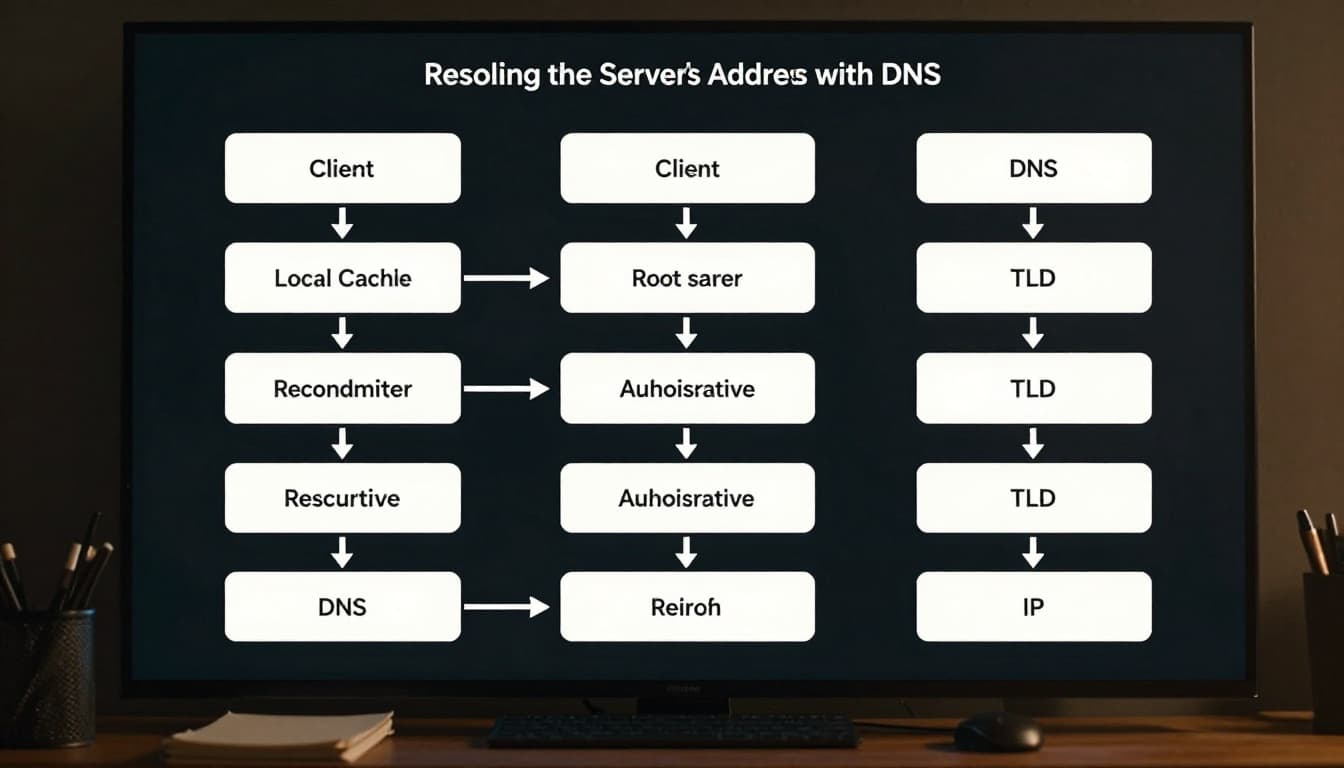

Resolving the Server’s Address with DNS

DNS turns api.example.com into an IP like 192.0.2.1. It starts local. Your device checks its cache first.

No hit? It asks a recursive resolver, often from your ISP. That resolver queries root servers. They point to TLD servers like .com. TLDs direct to authoritative servers. Those hold the final IP.

The chain caches results along the way. This speeds repeats. For details on each step, see Cloudflare’s DNS guide.

Think of it as a phone book chain. You ask friends first. Then libraries. Finally, the source. DNS-over-HTTPS (DoH) adds privacy by encrypting lookups between your device and the resolver.
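To make the chain concrete, here is a toy model in Python. Each "server" is just a dictionary, and the names tld-com and ns-example are invented for this sketch. Real DNS sends network queries, honors TTLs, and handles many record types.

```python
# Toy model only: every "server" is a dict lookup, not a network query.
ROOT = {"com": "tld-com"}  # root knows who handles each TLD
TLD = {"tld-com": {"example.com": "ns-example"}}  # TLD knows each domain's authoritative server
AUTHORITATIVE = {"ns-example": {"api.example.com": "192.0.2.1"}}  # holds the final IP


class Resolver:
    """A recursive resolver that walks root -> TLD -> authoritative, with a cache."""

    def __init__(self):
        self.cache = {}
        self.upstream_lookups = 0

    def resolve(self, hostname: str) -> str:
        if hostname in self.cache:  # cache hit: no walk needed
            return self.cache[hostname]
        self.upstream_lookups += 1
        labels = hostname.split(".")
        tld_server = ROOT[labels[-1]]
        auth_server = TLD[tld_server][".".join(labels[-2:])]
        ip = AUTHORITATIVE[auth_server][hostname]
        self.cache[hostname] = ip  # real resolvers expire this after the record's TTL
        return ip


resolver = Resolver()
print(resolver.resolve("api.example.com"))  # 192.0.2.1
print(resolver.resolve("api.example.com"))  # 192.0.2.1, served from cache
```

The second call never touches the root, TLD, or authoritative tables, which is why repeat lookups are fast.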

The Secure Dash Across the Internet

Now the request dashes out. It travels networks via TCP or QUIC. TCP builds reliable links with a three-way handshake: SYN, SYN-ACK, ACK.

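QUIC runs on UDP. It folds the transport and encryption handshakes into a single round trip. HTTP/3 uses QUIC. By March 2026, HTTP/3 powers 38.7% of sites. QUIC also avoids transport-level head-of-line blocking. Streams are independent, so one lost packet does not halt them all.

Anycast routes to the nearest edge. TLS 1.3 encrypts the request and response. Clients send “ClientHello” with key shares. Servers reply with their own share, so both sides can derive keys right away. Forward secrecy keeps past sessions safe even if a long-term key leaks later.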

This dash takes milliseconds. Road trips help picture it. TCP is a steady highway with checkpoints. QUIC offers express lanes that dodge jams.

Establishing a Safe Connection

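TCP delivers bytes in strict order. So one lost packet pauses everything queued behind it, even data for unrelated requests. QUIC tracks loss per stream, so only the affected stream waits. See HTTP/3 vs TCP comparisons.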
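A small simulation shows the difference. It is a simplified model, not a protocol implementation: packets are (stream, sequence) pairs, and the function names are made up for this sketch.

```python
def deliverable_tcp(packets, lost):
    """One ordered byte stream: nothing after the first gap reaches the app."""
    delivered = []
    for index, packet in enumerate(packets):
        if index in lost:
            break  # everything behind the gap waits for the retransmit
        delivered.append(packet)
    return delivered


def deliverable_quic(packets, lost):
    """Independent streams: a gap stalls only the stream it belongs to."""
    blocked_streams = set()
    delivered = []
    for index, (stream, seq) in enumerate(packets):
        if index in lost:
            blocked_streams.add(stream)
        elif stream not in blocked_streams:
            delivered.append((stream, seq))
    return delivered


# Two interleaved streams, A and B. The packet at index 1 (stream B) is lost.
packets = [("A", 0), ("B", 0), ("A", 1), ("B", 1), ("A", 2)]
lost = {1}

print(deliverable_tcp(packets, lost))   # [('A', 0)]
print(deliverable_quic(packets, lost))  # [('A', 0), ('A', 1), ('A', 2)]
```

In the TCP model, stream A stalls even though none of its packets were lost. In the QUIC model, only stream B waits for the retransmit.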

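TLS 1.3 completes its handshake in one round trip. The client offers cipher suites and key shares. The server picks a suite and returns its own share. Both sides then compute the same keys. Static RSA key exchange is gone. Full handshakes use ephemeral Diffie-Hellman, usually ECDHE, for forward secrecy.

Encryption hides payloads and headers. Some metadata, such as IP addresses and often the server name, stays visible to the network. In 2026, most traffic runs this way.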
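On the client side, insisting on TLS 1.3 takes only a few lines with Python's ssl module. This is a sketch of the configuration step only. It assumes the local OpenSSL build supports TLS 1.3, and the helper name is invented here.

```python
import ssl


def tls13_client_context() -> ssl.SSLContext:
    """Client context that verifies certificates and refuses anything below TLS 1.3."""
    context = ssl.create_default_context()  # certificate and hostname checks are on by default
    context.minimum_version = ssl.TLSVersion.TLSv1_3
    return context


context = tls13_client_context()
print(context.minimum_version.name)  # TLSv1_3
```

Wrapping a connected socket with context.wrap_socket(sock, server_hostname=...) then runs the handshake described above. Note that the standard library speaks TLS over TCP only; QUIC and HTTP/3 need a third-party library.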

Server Arrival: From Edge to Deep Processing

The request hits an edge node first. CDNs like Cloudflare check caches. Redis stores hot data. Hits return instantly.

No cache? Load balancers pick a backend. They use health checks and round-robin. Anycast sends to closest servers.

Auth validates tokens. Rate limiters block floods and answer with 429 Too Many Requests. Validators check the JSON body against a schema.
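Rate limiting can be sketched as a fixed-window counter. This is one simple strategy among several (token buckets and sliding windows are common alternatives), and the class below is an illustration, not any gateway's real implementation.

```python
import time


class FixedWindowLimiter:
    """Allow at most `limit` requests per client in each `window_seconds` window."""

    def __init__(self, limit, window_seconds, clock=time.monotonic):
        self.limit = limit
        self.window = window_seconds
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self.counters = {}  # client_id -> (window_start, request_count)

    def allow(self, client_id) -> bool:
        now = self.clock()
        start, count = self.counters.get(client_id, (now, 0))
        if now - start >= self.window:  # window expired: start a fresh one
            start, count = now, 0
        if count >= self.limit:
            return False  # the caller would answer with HTTP 429
        self.counters[client_id] = (start, count + 1)
        return True


limiter = FixedWindowLimiter(limit=2, window_seconds=60)
statuses = [200 if limiter.allow("client-1") else 429 for _ in range(3)]
print(statuses)  # [200, 200, 429]
```

A real service usually keeps these counters in shared storage so every server sees the same totals.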

Logic runs next. Orchestrators call services. Databases use connection pools. In 2026, AI agents handle queries. They build pipelines and fix issues.

Factory lines fit here. Edge inspects packages. Balancers route. Workers process. AI tweaks flows.

First Stops: Edge Caching and Load Balancing

Edge nodes cut trips. They cache with ETags. Misses go deeper. Balancers spread load. Health checks drop sick servers.
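Round-robin with health checks fits in a few lines. The sketch below is a toy: backend names are plain strings, and health is set by hand rather than by real probes.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Rotate through backends in order, skipping any marked unhealthy."""

    def __init__(self, backends):
        self.backends = list(backends)
        self.healthy = set(self.backends)
        self._ring = cycle(self.backends)

    def mark(self, backend, healthy: bool):
        """Record the result of a health check."""
        if healthy:
            self.healthy.add(backend)
        else:
            self.healthy.discard(backend)

    def pick(self):
        # Try each backend at most once per call before giving up.
        for _ in range(len(self.backends)):
            candidate = next(self._ring)
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy backends")


balancer = RoundRobinBalancer(["app-1", "app-2", "app-3"])
balancer.mark("app-2", healthy=False)  # a failed health check drops the sick server
print([balancer.pick() for _ in range(4)])  # ['app-1', 'app-3', 'app-1', 'app-3']
```

Marking app-2 healthy again puts it back in rotation on the next pass.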

Cloudflare’s tools shine here. Their Dynamic Workers beta spins tasks at edges.

Checking Permissions and Running the Logic

Servers verify credentials first. A JWT can be checked locally by verifying its signature, with no database lookup. Input validation comes next.
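To show what verifying a JWT involves, here is a stdlib-only sketch for HS256 tokens. It assumes a shared secret and checks only the signature and algorithm. It skips claims like exp and aud, so production code should use a maintained JWT library instead.

```python
import base64
import hashlib
import hmac
import json


def b64url_encode(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def b64url_decode(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))  # restore padding


def sign_hs256(payload: dict, secret: bytes) -> str:
    """Create a token: base64url(header).base64url(payload).base64url(signature)."""
    header = {"alg": "HS256", "typ": "JWT"}
    signing_input = ".".join(
        b64url_encode(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, payload)
    )
    signature = hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()
    return f"{signing_input}.{b64url_encode(signature)}"


def verify_hs256(token: str, secret: bytes) -> dict:
    """Return the payload if the signature checks out, else raise ValueError."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("malformed token")
    header_b64, payload_b64, signature_b64 = parts
    if json.loads(b64url_decode(header_b64)).get("alg") != "HS256":
        raise ValueError("unexpected algorithm")  # never trust the token's own alg choice
    expected = hmac.new(secret, f"{header_b64}.{payload_b64}".encode("ascii"), hashlib.sha256).digest()
    if not hmac.compare_digest(expected, b64url_decode(signature_b64)):  # constant-time compare
        raise ValueError("bad signature")
    return json.loads(b64url_decode(payload_b64))


# "demo-secret" and the payload are placeholders for illustration.
token = sign_hs256({"sub": "user-123"}, b"demo-secret")
print(verify_hs256(token, b"demo-secret"))  # {'sub': 'user-123'}
```

The check is pure computation, which is why it is fast: no network call or database read is needed.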

Business code pulls data. Pagination limits results. AI workflows now manage 30% of calls. Agents orchestrate without humans.

Errors trigger logs. 500s mean server faults. Traces and metrics from every request feed later tuning.

Response Comes Home with Speed Boosts

Servers craft replies quickly. A status line like 200 OK leads. Headers follow, such as Cache-Control: max-age=3600. CORS headers allow cross-origin requests from browsers.

JSON bodies pack data. Errors explain issues, like 400 for bad requests.

Caching layers speed returns. Edges store copies. Browsers use service workers. ETags check changes.
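The ETag check can be sketched as a tiny handler. It is a simplified model: it compares one exact ETag value, while real servers also handle lists of ETags and weak validators. The function names are invented for this example.

```python
import hashlib
from typing import Optional


def make_etag(body: bytes) -> str:
    """Derive a quoted ETag from the body, so it changes whenever the content does."""
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'


def respond(body: bytes, if_none_match: Optional[str] = None):
    """Return (status, headers, body) for a GET, honoring If-None-Match."""
    etag = make_etag(body)
    headers = {"ETag": etag, "Cache-Control": "max-age=3600"}
    if if_none_match == etag:
        return 304, headers, b""  # client copy is current: send no body
    return 200, headers, body


forecast = b'{"city": "NYC", "temp_c": 21}'  # made-up payload
status, headers, body = respond(forecast)
print(status)  # 200

# The client replays the ETag it received on its next request.
status, _, body = respond(forecast, if_none_match=headers["ETag"])
print(status, len(body))  # 304 0
```

The 304 reply carries no body, so an unchanged forecast costs only a few header bytes on the wire.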

Idempotency keys make retries safe. gRPC speeds up internal service-to-service calls. AI monitors traffic patterns.
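Here is a rough sketch of how an idempotency key protects a retried POST. The PostService class and its in-memory store are hypothetical. A real service would persist keys in shared storage and expire them.

```python
class PostService:
    """Hypothetical service that creates a post at most once per idempotency key."""

    def __init__(self):
        self.results = {}  # idempotency_key -> stored response
        self.posts_created = 0

    def create_post(self, idempotency_key: str, title: str) -> dict:
        if idempotency_key in self.results:
            # A retry of a request we already handled: replay the stored response.
            return self.results[idempotency_key]
        self.posts_created += 1
        response = {"id": self.posts_created, "title": title}
        self.results[idempotency_key] = response
        return response


service = PostService()
first = service.create_post("key-abc", "Hello World")
retry = service.create_post("key-abc", "Hello World")  # e.g. the client timed out and resent
print(first == retry, service.posts_created)  # True 1
```

The client generates the key once per logical operation and reuses it on every retry, so a dropped response never creates a duplicate.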

Test with curl: curl -H "Authorization: Bearer token" https://api.example.com/posts. Full loops hit 50-200ms. Edges crush that.

Crafting and Speeding Up the Reply

Responses mirror requests. Headers guide browsers. Caching stacks multiply speed.

Modern flows use QUIC. No blocking means parallel streams fly.

Your weather app updates. The cycle closes fast.

That weather pull you started? It now shows fresh data. APIs make it seamless.

Key steps include request build, DNS, secure send, edge checks, logic, and cached reply. Together they explain how a full loop fits in 50-200ms.

Debug with curl or Wireshark. Embrace QUIC and AI edges. Build faster apps today. Share your API stories below.